So I’ve had this thought for a while that I need to get out there, if for no other reason than that when it comes true I want to be able to look back and say “I told you so” to no one in particular.

The “generative” part of generative AI is going to be implemented into media, and we are going to go through the entire history of movies and video games all over again, but with AI. Let me explain.

In 1977, Atari came out, and graphics looked like this:

In 1985, Nintendo came out, and the graphics looked like this:

In 1996, Nintendo 64 came out, and the graphics looked like this:

In 2006, PlayStation 3 came out, and the graphics looked like this:

In 2013, PlayStation 4 came out, and the graphics looked like this:

In 2020, PlayStation 5 came out, and the graphics looked like this:

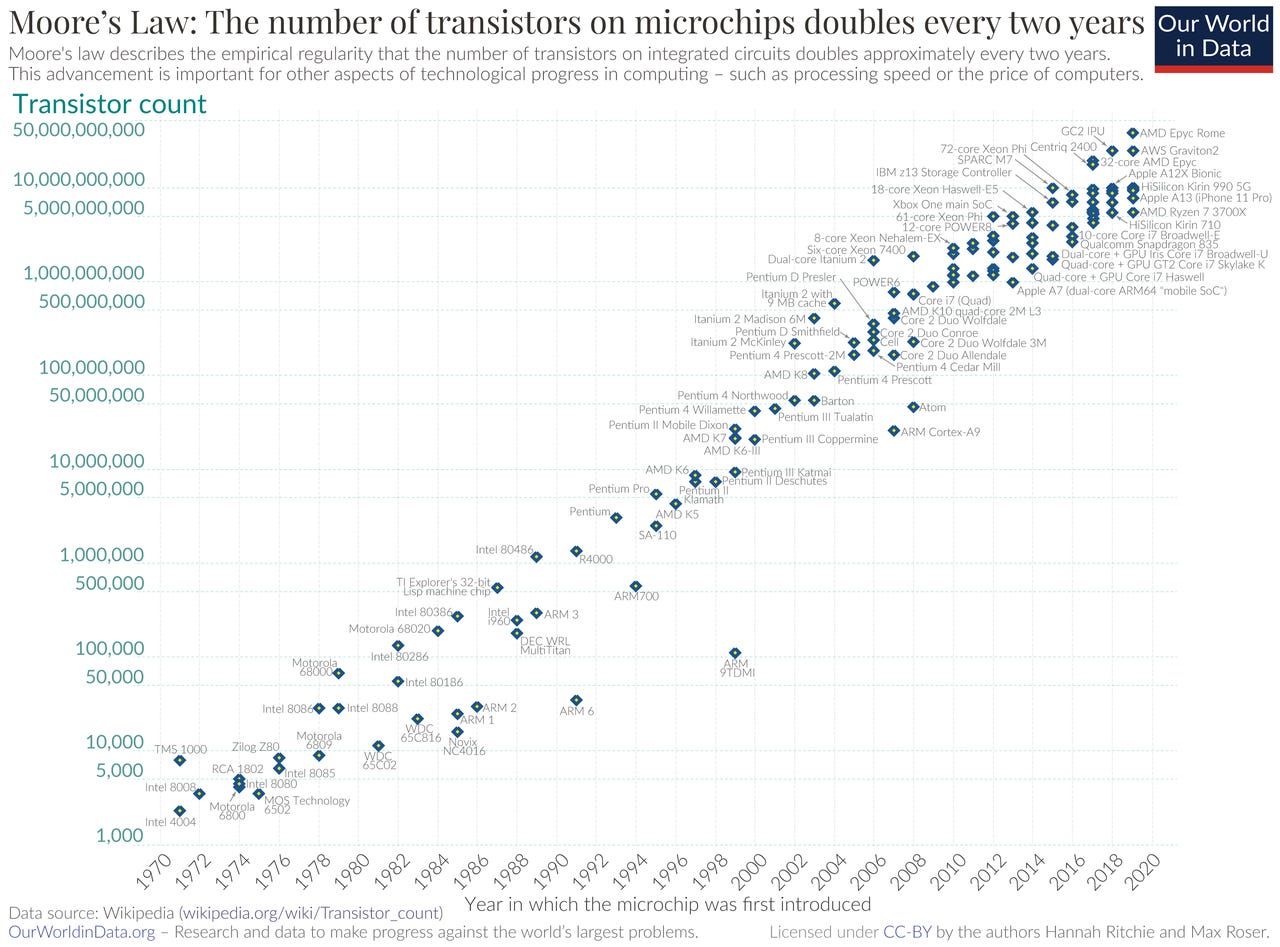

Most people are familiar with it, but there is something called Moore’s Law: the observation that the number of transistors on a chip doubles roughly every two years. That’s what has enabled more and more powerful chips inside smaller and smaller devices. And more powerful chips mean better graphics for video games.

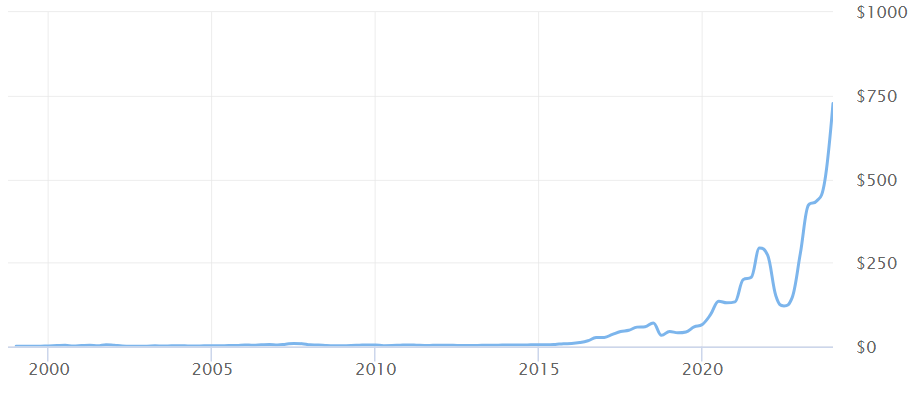

Straight from OpenAI’s research page, we know that the compute used in the largest AI training runs has been doubling every 3.4 months.

This pace of development is outpacing Moore’s Law.
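To get a feel for how different those two curves are, here is a rough back-of-the-envelope sketch. It assumes both trends are clean exponentials with fixed doubling periods (24 months and 3.4 months), which is a simplification; the function and constant names are made up for illustration.

```python
def growth_factor(months_elapsed: float, doubling_period_months: float) -> float:
    """How many times larger a quantity gets if it doubles every
    `doubling_period_months` for `months_elapsed` months."""
    return 2 ** (months_elapsed / doubling_period_months)


# Doubling periods taken from the text; treating them as constant is an assumption.
MOORE_DOUBLING_MONTHS = 24.0
AI_COMPUTE_DOUBLING_MONTHS = 3.4

if __name__ == "__main__":
    for years in (1, 2, 5):
        months = 12 * years
        moore = growth_factor(months, MOORE_DOUBLING_MONTHS)
        ai = growth_factor(months, AI_COMPUTE_DOUBLING_MONTHS)
        print(f"{years} yr: Moore's Law x{moore:,.1f}, AI compute x{ai:,.1f}")
```

Over two years the first curve doubles exactly once, while the second grows by more than a hundredfold.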

But all of this learning that the AI is doing still comes from compute. That compute has to run on some sort of hardware, and NVIDIA makes the best hardware, which is why their stock has been through the roof lately.

So with all this crazy technology and compute power, kids are probably playing some pretty insanely detailed games, right?

Well, no. They are playing Minecraft.

Something that looks like it could run on an Atari in 1977.

We’re actually seeing a lot of homages to the past lately.

Vintage clothing is becoming more popular, thrifting is cool

Having blurry or low-res photos on your Instagram

RFK’s Super Bowl ad

Volkswagen’s Super Bowl ad

This black-and-white Porsche Taycan billboard I snapped from the freeway

Fashion is always cyclical, and odes to Americana are nothing new in politics and car commercials, but take a moment to consider the things in your day-to-day life that make you feel like the older version was better, or that a new model is unnecessary (cough, smartphones, toasters, microwaves). This could be a whole separate article, but the quality and craftsmanship of things have plateaued with the hyperconsumerization that we have bestowed upon everything.

In any event, the kids are playing Minecraft. A game that is objectively subpar in graphics, but makes up for it in replayability thanks to its infinitely customizable maps and objectives, and the fact that you can just run around and explore worlds with your friends. Kids are opting for multiplayer Atari where you can generate your own character, setting, and game.

Rosebud.ai is a startup that I think is kicking off the next generation of gaming, which is generative gaming. If I were a VC I would write this company the fattest check.

Like I wrote about a year ago when describing Andrej Karpathy’s (former head of AI at Tesla) new way to code now that Copilot has been invented, “just prompt, and edit”, kids playing games will have the same sensation: just prompt, and play.

The deep human element in all of this is that at its core it is just storytelling. Storytelling is the best way to get a point across, whether it is sharing the oral history of your tribe, or trying to sell your product to a room full of people with a PowerPoint. People remember stories; they don’t remember isolated facts and figures. When world-record-holding memory experts have to remember an entire deck of cards in order, they resort to mnemonic devices for each card, but stringing the cards together requires a story. This is why games are powerful, and this is why books, movies, politics, social media, and sports are powerful. They are stories.

Right now only a few people on this earth can tell stories to a mass audience, because the tools required to do so have steep learning curves (or capital requirements). It costs a lot to make a movie, it takes a lot of talent to make a video game, it takes a lot of time to write a novel. Social media has democratized some of this, but talent is still required to hold people’s attention, and the bar is pretty low in terms of what will captivate an audience right now.

But we have Midjourney, which can generate images from a prompt. We have RunwayML, which can turn those images into videos. And, just announced, we have Sora, OpenAI’s text-to-video model that lets users prompt a video.

The issue blocking all of these products from insane levels of productivity is compute. Midjourney takes 60 seconds to produce a hi-res image. Runway lets you produce about 200 seconds of video per month on its basic plan. Sora isn’t out yet. Rosebud allows for game generation, but not quite in real time, and only in Minecraft-esque graphics. As Moore’s Law continues upward, with AI’s exponential growth stacked on top of it, things should escalate quickly. Even though some say that Moore’s Law is dead, or has tapered off, new types of processors are still being created specifically for AI training and inference (the process in which the AI makes predictions on never-before-seen data). This will branch into a whole new category (or many categories) of processors and other hardware built specifically for AI.
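As a rough illustration of what “escalate quickly” could mean, here is a sketch of how many doublings in speed it would take to go from 60 seconds per image to real-time frames. The 30 frames-per-second target is my own assumption, and so is the idea that generation speed doubles on either schedule (the 3.4-month figure describes training compute, not generation speed), so treat the output as a thought experiment rather than a forecast.

```python
import math


def doublings_needed(current_seconds: float, target_seconds: float) -> float:
    """Number of times speed must double to shrink the time per output
    from `current_seconds` to `target_seconds`."""
    return math.log2(current_seconds / target_seconds)


CURRENT_SECONDS_PER_IMAGE = 60.0       # the Midjourney figure from the text
TARGET_SECONDS_PER_FRAME = 1.0 / 30.0  # assumption: 30 frames per second


def years_to_target(doubling_period_months: float) -> float:
    """Years until the target is reached, IF speed doubled on this schedule
    (a hypothetical; nothing guarantees speed follows either curve)."""
    n = doublings_needed(CURRENT_SECONDS_PER_IMAGE, TARGET_SECONDS_PER_FRAME)
    return n * doubling_period_months / 12.0


if __name__ == "__main__":
    n = doublings_needed(CURRENT_SECONDS_PER_IMAGE, TARGET_SECONDS_PER_FRAME)
    print(f"Doublings needed: {n:.1f}")
    print(f"At one doubling every 24 months: {years_to_target(24.0):.1f} years")
    print(f"At one doubling every 3.4 months: {years_to_target(3.4):.1f} years")
```

The gap between the two schedules is the whole point: the same number of doublings takes decades on one curve and only a few years on the other.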

The net-net of the situation is that the power of the compute is going up, as the cost of energy is coming down. What this yields is more generated content, faster, and of higher quality, for cheaper.

And so, we are about to go through another graphics renaissance, like the one I laid out above, but in each one of those iterations (Atari, NES, N64, etc.), the user is going to be prompting or generating content on the fly. This doesn’t have to be relegated just to gaming; it applies to any medium where there is performance art, or storytelling, or just plain visual or auditory sensation happening.

Imagine an expert prompter creating a movie on the fly to an audience full of movie-goers the way an expert conductor would conduct an orchestra.

Imagine a game that doesn’t have prebuilt worlds, but every door you open in it is a unique experience catered exactly to the nature of its player.

It truly is a new platform to build on: a user who enters the domain as an active participant rather than a bystander could produce all sorts of new ways to tell stories.

The return to vintage is in part due to this plateauing of technology, and in part cyclical in nature, but it is also serendipitously priming us to begin a new evolution all over again. I remember being a kid and being blown away by each console’s upgrade of graphics over the previous generation. We are now back at that square one, but with AI. It will be exciting to go through all the iterations, again, for the first time.