The AI Article

The Inverted Value-add Pyramid, and Dueling Gödels

G’day mates.

I’m not Australian, but I do enjoy diversifying my salutations. In fact, I enjoy diversifying a lot of things in my life. I can’t eat the same meal every day. Actually, I can’t even cook the same meal the same way twice. I always have to be tweaking, testing, optimizing. I don’t like to watch movies more than once (except for a select few). I have automated the majority of my day job, but there are always little design adjustments, and if not enough things change, or if little inefficiencies build up, I am wont to tear things down and rebuild them in their entirety.

In short, I am human. I have a personality type, which (on brand for me) seems to change every time I take the test. I’m a performer with some, and an observer with others. Some people are not as inconsistent as me. I am sure many of you read the first paragraph and thought “how horribly dreadful” to have such a lack of structure. Some people meal prep and eat the exact same thing every day because it saves them time or money, because of their health, or because it saves mental energy (like how Zuck famously told people he wears the same T-shirt every day so he can spend his precious focus on more important things). We all do weird shit.

This article is about AI, but we really can’t talk about AI without talking about humans. Humans are the actual I, and it’s important to remember this because it helps us picture how we can use AI better, who is going to get the most out of it, who it is going to hurt the most, and where it could be going. Humans not only have different personality types, but also different levels of motivation, cognition, and communication.

Getting the most out of AI right now is more akin to computer programming than to talking with a really smart human whose brain is connected to the internet. You have to craft the right syntax, set the proper boundary conditions, and constantly iterate. The early hype around ChatGPT has people coining terms like “prompt engineers” (people who are highly proficient at getting what they want out of the machine) and “magic spells” (a certain sequence of prompts, kept secret, that again gets the machine to do as you command). Both of those things can be likened to computer code, which makes sense, because this is nothing more than a program built by programmers, for programmers. That leads to the most impactful part of AI at the moment.
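To make the programming analogy concrete, here is a minimal sketch that assumes nothing about any particular AI product. A hypothetical `build_prompt` helper treats a prompt like a function call, with a task, explicit boundary conditions, and a required output shape. In this framing, iterating means changing the inputs and trying again. No model is called here; the function only assembles text.

```python
def build_prompt(task: str, constraints: list[str], output_format: str) -> str:
    """Hypothetical helper: assemble a prompt from a task, explicit
    boundary conditions (constraints), and the shape of the answer wanted."""
    lines = [f"Task: {task}", "Constraints:"]
    # Each boundary condition becomes its own bullet so none get lost
    lines += [f"- {c}" for c in constraints]
    lines.append(f"Output format: {output_format}")
    return "\n".join(lines)


# First attempt
prompt = build_prompt(
    task="Write a slogan for a shampoo brand",
    constraints=["At most 8 words", "Aimed at men"],
    output_format="A single line of plain text",
)

# Iterate: tighten the boundary conditions after seeing a result you don't like
prompt_v2 = build_prompt(
    task="Write a slogan for a shampoo brand",
    constraints=["At most 8 words", "Aimed at men", "Mention confidence"],
    output_format="A single line of plain text",
)

print(prompt_v2)
```

Adding or removing a constraint and re-running works like editing a function's arguments. That is why the loop resembles debugging more than conversation.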

Andrej Karpathy was Sr. Director of AI at Tesla and head of the computer vision team at Tesla Autopilot, leading the Full Self-Driving project. He is also a founding member of OpenAI, the group that built ChatGPT. Essentially, he is one of the premier minds in AI and neural network training, and here he is saying that GitHub Copilot is writing 80% of his code. He just prompts and edits.

What an amazing unlock! Like our education system, or an NBA team, AI gives a disproportionate advantage to those who are already talented. We don’t need to debate “trickle-down economics” here; the point is that there is an inverted pyramid of value-add that AI delivers. The most productive people in society will become more productive. That is not to say there is no value-add further down the chain; it’s just that, like most things, you have to put a little bit of work in to crest that activated complex. For expert coders it might be a 10^10 force multiplier, and for basic engineers it might be 10^2, but for those just starting out, it can get you from 0-1, which might actually be the biggest unlock of all, depending on how many choose to open that door and what they do with that power. A million people going from 0-1 is gigantic, but still pales in comparison to one person going 10^10.
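Here is how that comparison works out if you read the figures literally. This assumes each newcomer goes from zero to one unit of output and the expert starts from a baseline of one unit:

```latex
\underbrace{10^{6} \times 1}_{\text{a million people going } 0 \to 1} = 10^{6}
\qquad \text{vs.} \qquad
\underbrace{1 \times 10^{10}}_{\text{one expert at } 10^{10}} = 10^{10},
\qquad
\frac{10^{10}}{10^{6}} = 10^{4}
```

Under those assumptions, the single expert out-produces the entire million by a factor of ten thousand.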

There is an inverted pyramid of value-capture to utilizing AI: the most productive people in society will become more productive.

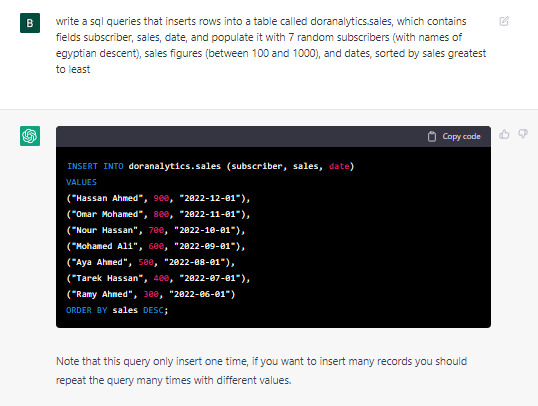

The daunting part about code is the syntax. Like AP or MLA formatting from grade school, code has to follow a rigid structure in order to work. It’s not a “natural” language. Fortunately, natural language is exactly what OpenAI’s models are trained on. The 0-1 function works because it can tell you not only how to get started (where to insert the code), but also what the code should actually be:

Then, once you have an idea of what the coding language can do, your prompts will become more structured. You can give it natural language direction, and it can translate that into the more rigid code structure (with the added bonus of being able to copy the code right out of the app):
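To make that concrete, here is a hypothetical example of the translation, not the one from the author's own session. It pairs a plain-English request with the kind of Python an assistant might return for it. The function name `totals_by_category` and the sample data are illustrative assumptions.

```python
# Hypothetical plain-English prompt:
#   "Given a list of expenses as (category, amount) pairs,
#    total the amount spent in each category."
from collections import defaultdict


def totals_by_category(expenses: list[tuple[str, int]]) -> dict[str, int]:
    """Sum expense amounts (whole dollars) for each category."""
    totals = defaultdict(int)  # missing categories start at 0
    for category, amount in expenses:
        totals[category] += amount
    return dict(totals)  # plain dict, in first-seen category order


expenses = [("food", 12), ("rent", 1500), ("food", 30), ("gas", 45)]
print(totals_by_category(expenses))  # {'food': 42, 'rent': 1500, 'gas': 45}
```

The English sentence carries the intent. The model supplies the rigid parts, such as the loop, the default of zero for new categories, and the type annotations.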

As I have quoted from Naval Ravikant many times, to generate wealth you need leverage, and there are four modern forms of leverage: labor, capital, media, and code. AI language models are powerful transformers that give instant leverage to anyone willing to wield them.

Which is a nice segue into media. The biggest trend, obvious to most everyone, is that the cost of content was already approaching 0. As I wrote in The Creator Economy, Hollywood has gotten so watered down, and the quality of made-at-home TikToks has gotten so good, that it doesn’t make sense for Hollywood to keep investing so much money into production when kids are doing it for free. At some point the margins will be so thin that big production companies will have to consolidate around a few franchises that make enough money to fund passion projects (oh wait...).

Media spans a lot of different mediums, but the key takeaway here is that the leverage is democratized, which means spammers have access too. Think of how much “content” is actually spam: all the engagement-bait Twitter threads, the articles that say “One Weird Ancient Secret to Lose Belly Fat”, and basically anything out of the mouth of a member of Congress. Forget about advertising, forget about what is fact or truth; I am merely talking about content that is meant to be a creative piece of work. It’s been commoditized, and now it can be weaponized.

Here is a quick short story I had ChatGPT whip up with a simple prompt:

Authors stand to get paid via advertising, subscribers, or their employers, and they are under pressure to produce things day in and day out. How easy is it to reach for a little performance-enhancing drug?

Most content exists on the internet as a front for some kind of advertising anyway (the Original Sin of the Internet), so AI is another huge unlock for marketers. Here, in one prompt, ChatGPT creates a brand, a SKU, a slogan, and full-blown copy for an ad. I could generate hundreds of thousands of these ideas and instantly shop them around the internet looking for a celebrity sponsor.

What’s more, you can use Midjourney for text-to-image generation. Here I gave it a simple command to create the Macho Mane product, and it gave me 4 options:

I can choose one of the options to create more variations of:

And I can upscale the imagery:

Now you may be thinking, that ad sounds stupid and no one would ever buy that product. I would argue that there are way worse ads and products out there, written by humans, that went through a lot more scrutiny than this. Also, I literally created this entire shampoo brand in the morning before work as my eggs cooked (I slow-fry my eggs over easy in olive oil on cast iron, so it takes ~20 mins), which included signing up for Midjourney and figuring out how to use it, all while writing this article. So imagine this being done at scale, with purpose. Plus, as Hollywood showed us with the commoditization of streaming video content, an abundance of shitty supply can drown out the signal from the quality stuff. Said another way, these ads, this content, and these brands are going to spam us all so easily that it will be hard to find that quality signal. Not for lack of trying, or lack of recognition, but through sheer volume of junk. Quality stuff might never cross your feed.

On the positive side: this creates a reenergized need for curation. As I talked about in The Age of Authenticity, consumers are gravitating towards humans they feel aligned with. However, AI makes it easier for those humans to launch brands, and create content, potentially watering down the signal we worked so hard to find, and the creators worked so hard to develop. The Saga Continues.. Wu-Tang.

So are we doomed to endlessly doomscroll through doomsday news feeds fattened with AI-generated ads for worthless tchotchkes? Would that be so different from where we already are? Looking over the horizon, I think this unlocks media for those with existing skills the same way it does for programmers.

Think of a director who knows exactly how he wants a scene shot. He is an expert communicator. He says something that doesn’t quite make sense, but the actor interprets it in his own way and nails the scene without really understanding what the instruction accomplished. As with coding, the visionaries who can see over the horizon, who see the finished product and work backwards, are the ones who generate the most leverage. So, as with coding, a million people going 0-1, entering the arena and spamming up the joint, pales in comparison to a true artist taking his art 10^10. Remember, the origin of the Intelligence is always human.

What does 10^10 art and media look like? Well, it is likely something we can’t even conceive of yet. I could conjecture about integrations of time, space, mediums, perceptions, senses, and emotions, but as with every period in art history, there is the shock of the new. Things are presented in a way that evokes feelings we have never had, but in order for us to experience those things, someone has to imagine them.

Midjourney prompt: /imagine a man experiencing all emotions at once: sadness, frustration, joy, excitement, fear, enlightenment. Make it photo realistic, encompassing nods to both post-modernism and ancient classical art.

Does this simple prompt evoke Caravaggio levels of emotion? Of course not, but I am merely going 0-1, creating something where there was nothing. Surely Caravaggio could pluck the prompt strings in such a way as to arouse a more sophisticated vibrato.

OK, so what about everything else? Most jobs aren’t coding or media; they are boring forms, wonky systems, and human processes fraught with human errors. Progress is stifled by human knowledge, human motivation, human ego, and human politics. Like computers in the 80s, AI can be industry-agnostic. It will affect different roles in different sectors in different ways. There are too many sectors of the economy to go down a rabbit hole on each one (ChatGPT has reportedly passed the bar, and the CPA is next), so instead I will generalize about what I think it will and won’t do in the immediate future.

The two most popular articles I have written over the past year (as judged by the number of new subscribers they generated), Middlemen America and America’s Trade Problem, both revolve around the same general theme: there are doers and don’ters in this world. Let me be perfectly clear: AI is not coming for your job, but a more motivated human utilizing AI is. If you are equal to someone else in knowledge and productivity, and they are supplementing their knowledge and productivity with AI, you will lose to that person.

AI is not coming for your job, but a more motivated human utilizing AI is.

Imagine you have two athletes with the same physique, endurance, bench press, 40-yard dash, vertical leap, and hand-eye coordination, and you throw them into a basketball game. One of them knows the rules and the other does not. Obviously the one who knows is going to be infinitely more successful, as long as the score is being kept within the structure of the game. Because the rules of society exist within a framework, be that the laws of physics, the legislative system, or the financial system, participants have to play within that structure. That is not to say that the structure cannot be expanded or modified, but those events are extremely rare. AI is so useful at explaining this structure, the rigidity that we all hate yet are prisoner to, that outsourcing it to AI frees humans to do what they do best: be creative and solve problems.

I have a friend who likened this to Gödel's incompleteness theorems. Gödel's incompleteness theorems are results in mathematical logic showing that any consistent formal system powerful enough to express basic arithmetic cannot prove every truth expressible within it. Pretend the logical system is a game. You can use the rules of the game to determine the outcome of certain actions within the game. But what if there are some outcomes that can't be determined just by following the rules? That's what the first incompleteness theorem says: there will always be true statements that can't be proven just by using the rules of the system.

In other words, no matter how complete a consistent system of this kind may seem, there will always be statements it can neither prove nor disprove. This means there are limits to what any single formal system can prove, even in the world of mathematics and logic.
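Behind the game analogy is a precise statement: the first incompleteness theorem in its standard modern (Gödel–Rosser) form. In it, T is a formal theory whose axioms can be listed by an algorithm. Q is Robinson arithmetic, a weak fragment of ordinary arithmetic. The symbol ⊬ means "does not prove":

```latex
T \text{ consistent},\quad T \text{ effectively axiomatized},\quad T \supseteq Q
\;\Longrightarrow\;
\exists\, \text{a sentence } G_T \text{ such that } T \nvdash G_T \ \text{ and } \ T \nvdash \neg G_T
```

In the analogy, the "rules of the game" are the axioms and inference rules of T, and G_T is an outcome those rules can never settle. Gödel's original sentence informally says "I am not provable in T." Whenever T is consistent, that sentence is also true of the ordinary natural numbers. This is where the phrase "truths that can't be proven" comes from.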

AI is the system, and there are truths within that system that it can’t itself prove. Human ingenuity is required. That being said, the inverse is also true: humans exist in a system that contains truths they cannot themselves prove. AI is required. Thus the symbiotic relationship between man and machine is beginning to evolve out of its v0.1 phase and into a true beta version. The dueling incompleteness theorems can expand the pie, but trying to step outside of the pie will create a sort of circular reference issue.

Does this mean that I do not believe AGI is possible? I am a techno-optimist, so I don’t rule it out, but beyond the dueling Gödels, and beyond having the computer truly learn and create on its own (which is quite a big deal), there is the energy issue. As you have read in previous articles, my pet theory of the world is that all issues can be boiled down to energy and education. AI is no different. Human ingenuity can create a more powerful AI that can in turn create a more knowledgeable person; that is the education perspective. The energy issue is that these models take an incredible amount of computing power to learn and to run. By many reports, OpenAI is paying upwards of $3M per day to keep ChatGPT free to the world, and that is at today’s speeds, where Midjourney takes about 60 seconds to make a small image. The electric power required to expand beyond this is astronomically expensive. As the price of energy goes, so goes our ability to compute. For now, we are limited to our simple system.
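Taking the reported $3M-per-day figure at face value, the annualized cost works out as follows:

```latex
\$3 \times 10^{6}\ \text{per day} \times 365\ \text{days per year}
= \$1.095 \times 10^{9}\ \text{per year}
\approx \$1.1\ \text{billion per year}
```

That is on the order of a billion dollars a year just to keep ChatGPT free.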

I’ll leave you with a final thought, which shows my optimism for technology and humanity:

Crypto in its current form is like a crude Stone Age tool for digging holes or hunting animals. AI is like a piece of flint, used for shaping tools or sparking fire. It’s the year 10,000 B.C.

Substack doesn't yet have a highlight feature, but here are two passages that stood out to me:

> A million people going from 0-1 is gigantic, but still pales in comparison to one person going 10^10.

> AI is not coming for your job, but a more motivated human utilizing AI is.